author of Honorable Influence - founder of Mindful Marketing

A former student of mine, Kaylee Enck, recently messaged me to ask my opinion about a rom-com. I’m not the best person for questions about romantic cinema, but Kaylee wasn’t really interested in my perspective on the movie, Anyone But You; she wanted to know my thoughts about a very unconventional tactic used to promote the film, as she explained:

“The movie went viral because everyone thought the two leads had fallen in love with each other off-screen, even though both were in serious, committed relationships with other people at the time. They took it so far that the male lead's real-life fiancée actually called off their engagement. A few days ago, it was revealed that the whole thing was a marketing ploy invented by Sydney Sweeney, the lead actress and an executive producer on the film.”

With Kaylee’s clear event summary and some additional background from a link she provided, I was glad to offer my perspective:

Thank you for sharing this story. It seems like a very low strategy, both because of the widespread intentional deceit and because of the negative impact on real relationships. As I think of the broader issues involved, the strategy may reflect a growing tendency to put work ahead of the people in our lives and a willingness to do anything for money or fame.

Kaylee thanked me for my reply, and we could have been done there, but her question got me thinking . . . the markets for products related to love are many and huge! Besides certain movie genres, there are dozens of other products that are often, if not always, connected to love, for instance:

- Television shows: old ones, e.g., The Love Boat, The Dating Game, and soap operas, and new ones, e.g., 90-Day Fiancé, The Bachelor, The Bachelorette, Golden Bachelor, and Love Island

- Plays/musicals

- Songs: so much music has been written about love

- Books: romance novels

- Dating apps

- Greeting cards

- Flowers

- Candy

- Romantic dinners

- Jewelry: particularly engagement rings and wedding rings

- Clothing: wedding apparel, lingerie

- Wedding venues

- Wedding photography

- Honeymoons

- Perfume and cologne

- Toothpaste and mouthwash

- Teeth whitening

- Makeup

- Hair and skincare products

- Cosmetic surgery

There are likely more, but this is at least a good start for a list that can be categorized in several different ways, e.g., goods vs. services, or romantic love vs. friendship love. Another way to slice it is products that offer a direct, personal love benefit vs. a vicarious one, i.e., enjoying someone else’s love experience. Dating apps and wedding rings are the former, while rom-coms and romance novels are the latter.

Is one of these value propositions (direct or vicarious) more moral than the other? Probably not. Just as it’s great that resorts offer honeymoon vacation packages for newlyweds, it’s nice that people who enjoy romance novels can read about couples going on their honeymoons. Buyers and sellers of both benefit without anything being inherently unethical.

Then, what’s wrong with a business model based on love?

That’s not a rhetorical question. There are, unfortunately, many specific ways such a model can be misappropriated, but the general downfall is when profit takes precedence over people and individuals are injured physically, emotionally, or relationally.

Sometimes called “the oldest profession,” prostitution is the classic example of such harm and the reason why historically most societies have considered harlotry immoral. Even if there are two ostensibly willing parties, this selling of “love” causes relational harm to family members of those involved in the act, as well as broader harm to the family as a societal institution.

Movie and TV show sex scenes are another example of potential harm. Even if camera angles and editing suggest more physical intimacy than actually occurs, the actors involved in the loveless, commitment-less contact expose themselves to what may be lasting emotional harm, as Nedra Gallegos, an instructor at the Los Angeles Campus of the New York Film Academy, implies: “The narrative may be fictional, but the contact is real.”

Unlike the previous two examples, the issue with Anyone But You was not overtly sex but rather the costars putting the success of their movie ahead of their own real relationships/significant others. In this instance, the relational harm was direct, as suggested by the breakup of actor Glen Powell and his girlfriend Gigi Paris.

I'd shared with Kaylee my opinion of the movie’s marketing tactics, but I really wanted to hear hers, since she’s a communications and marketing professional who knows more about the rom-com genre than I do. Here’s her perspective, which is influenced by her Christian faith:

“What marketing really boils down to is providing value to the consumer. What is more valuable to us as humans than love, though? When tapping into that sacred emotion, one has to do so cautiously, because no matter how hard we try, no product/service we offer can actually bring someone lasting love; only our relationships, especially our relationship with Christ, can provide that. Transparent, honest advertising, even if not as monetarily successful in the here-and-now, will always win out in the end.”

Her admonitions for transparency and not allowing anything to replace real relationships are great ones for everyone. Coincidentally, they are also consistent with some other Love Boat theme song lyrics that identify love as “life’s sweetest reward,” and that prioritize love that “won't hurt anymore.”

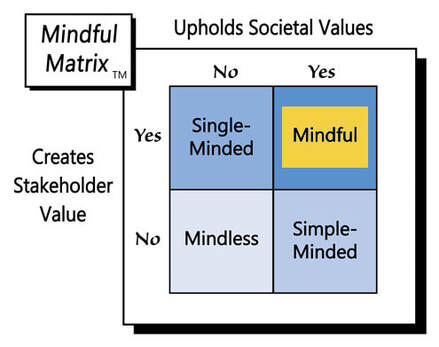

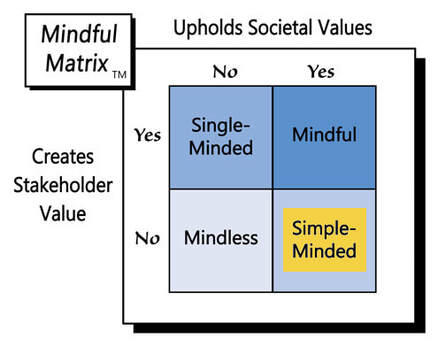

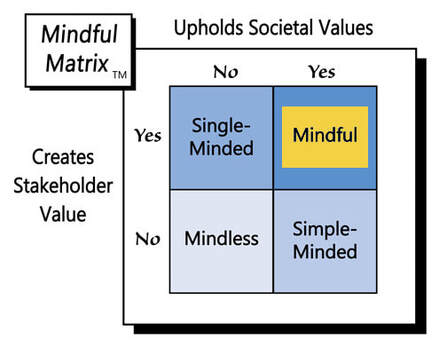

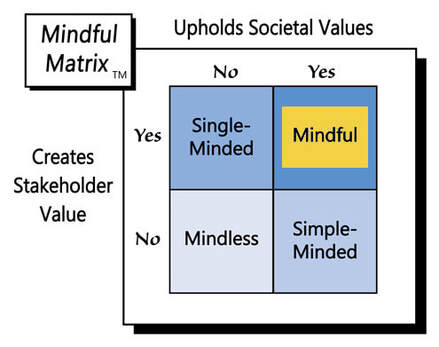

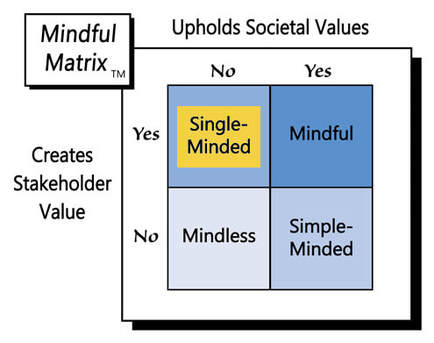

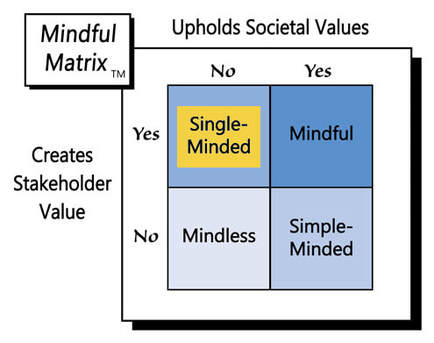

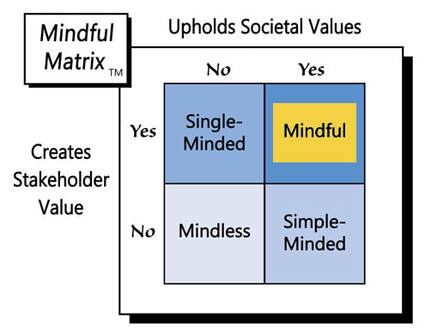

There are good ways that marketing can help start, strengthen, and celebrate real relationships, as well as provide edifying relationship-focused entertainment. However, even effective strategies that place profit ahead of people are “Single-Minded Marketing.”

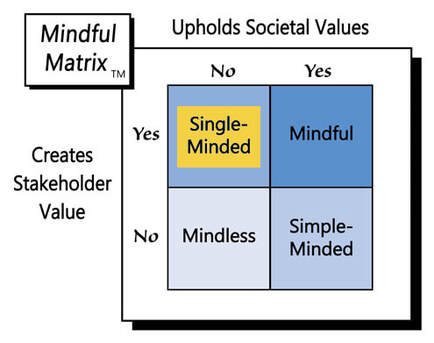

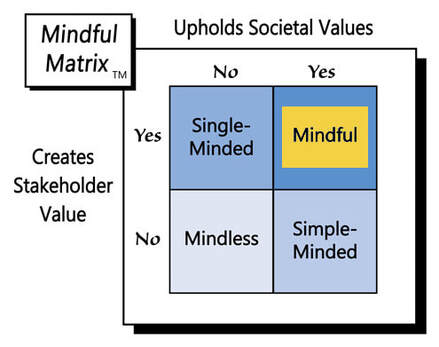

Learn more about the Mindful Matrix.

Check out Mindful Marketing Ads and Vote your Mind!
